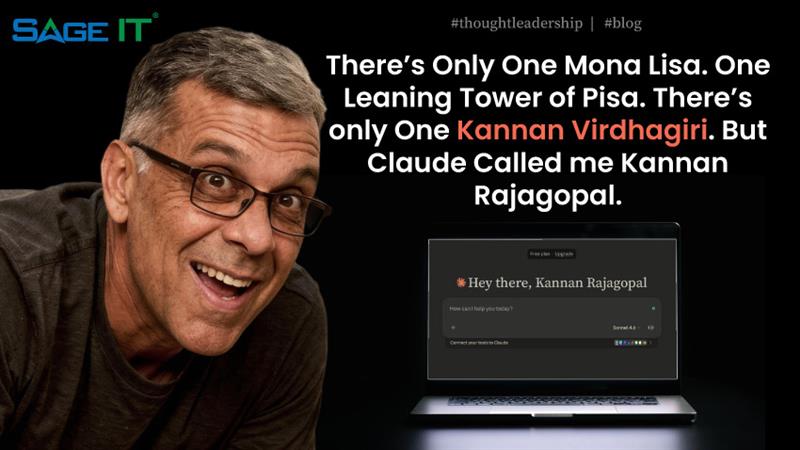

I was collaborating with Claude on what I deemed to be a clever blog post about COBOL modernization. Claude and I were going great. I had enabled memory access and provided enough context. We were one voice, my avatar and I. But when it came to wrapping it all up, Claude signed off with a flourish and called me Kannan Rajagopal, not Kannan Virdhagiri. And it did it twice. Here’s what that taught me about the two wars raging inside every large language model.

T.G. Sheppard had a point. Good things come in ones. The Mona Lisa. The Leaning Tower of Pisa. One Paris. One Fifth Avenue. One of me. For you Claudemaxxers, T.G. Sheppard was a country icon of the 70s… I warned you I was working on a COBOL modernization blog, so that’s the kinda music I quote from. Apparently, my AI missed that memo.

I was deep into writing a blog post with Claude Opus about COBOL being the new asbestos (a piece I’ll share next, hold tight), and we had a well-crafted essay. Every paragraph dripped with the sardonic energy I’d asked for, and there was enough of a KannanITL imprint for the reader to recognize it was me at the wheel.

Then, at the bottom, the author bio:

“Kannan Rajagopal is a North America Sales Leader at Sage IT…”

Record scratch. My last name is Virdhagiri. Not Rajagopal. And it didn’t just do it once. It did it twice. With full confidence. Zero hesitation. The AI equivalent of someone calling you the wrong name at your own wedding and then doing it again during the toast.

So naturally, I did what any self-respecting techie would do. I asked the machine to explain itself. Get geeky. Show me the receipts.

What it told me was genuinely illuminating — and it boils down to two invisible wars happening inside every LLM, every time it generates a single word. So I abandoned my COBOL blog for the moment and figured I’d share the learning with you all.

Memory vs. Weight

The battle between what it knows right now and what it learned forever ago

Here’s the thing most people don’t realize about AI: it has two brains. Two completely different sources of “truth” that are constantly arm-wrestling each other.

Memory is what you tell it in the moment. The context window. The conversation. The facts you feed it before it starts typing. In my case, my name — Kannan Virdhagiri — was sitting right there in the system’s memory, injected at the start of every conversation. The AI knew my name. It had the data.

Weight is everything the model absorbed during training: billions of documents, terabytes of internet, every name-surname combination that ever appeared on a webpage, a LinkedIn profile, an academic paper, a wedding announcement. These weights are baked into the model’s neurons like muscle memory. They don’t update in real time. They just… are.

And here’s where it gets interesting. “Kannan Rajagopal” plausibly appears across a huge number of documents in the training data (I can’t audit the corpus, but it’s a common pairing). It’s statistically dominant. “Kannan Virdhagiri” appears in… well, documents I wrote. Maybe a few LinkedIn posts. I am, quite literally, a rounding error in the training corpus.

So when the model reached the bio line — the low-stakes, autopilot text at the end of a long creative generation — the weights overpowered the memory. Muscle memory beat conscious recall. It’s like asking someone to spell your unusual name right after they’ve met forty Smiths or Kumars at a conference. The fingers just… type what they know.

// Two sources of truth. One winner.

memory.inject(“user_name: Kannan Virdhagiri”) // correct, present

weights.prior(“Kannan” + surname) // trained on entire internet

// At token generation time:

memory → “Virdhagiri” // signal strength: whisper

weights → “Rajagopal” // signal strength: megaphone

// Attention was spent on the essay.

// Bio line got the leftovers.

output: “Kannan Rajagopal” // confident. wrong.
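For the geeks who want that tug-of-war as something they can actually run, here is a minimal toy sketch. To be clear, this is not how Claude works internally. The surnames' scores and the `context_boost` knob are numbers I made up purely for illustration; the only real idea is that the final pick comes from adding a context signal on top of a trained-in prior and seeing which score ends up highest.

```python
import math

# Hypothetical "weight" scores for the token after "Kannan".
# Invented for illustration; not measured from any real model.
PRIOR_LOGITS = {"Rajagopal": 4.0, "Krishnamurthy": 2.5, "Virdhagiri": -3.0}


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits.values())
    exps = {name: math.exp(score - peak) for name, score in logits.items()}
    total = sum(exps.values())
    return {name: value / total for name, value in exps.items()}


def next_surname(context_boost):
    """Add the in-context signal for the correct surname, then pick greedily."""
    logits = dict(PRIOR_LOGITS)
    logits["Virdhagiri"] += context_boost  # how loudly memory is "heard"
    probs = softmax(logits)
    return max(probs, key=probs.get), probs


# Memory at a whisper: the prior still wins.
weak_pick, weak_probs = next_surname(context_boost=3.0)
# Memory at full volume: the context wins.
strong_pick, strong_probs = next_surname(context_boost=9.0)

print(weak_pick, strong_pick)
```

The correct name is present in both runs. The only thing that changes is how much weight the context signal gets relative to the prior, which is the whole point of this section.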

Claude’s own confession: “By the time I hit the bio, I’d spent all my attention budget on the essay itself. The creative stuff got the focus. The ‘easy’ stuff got the hallucination.”

Sound familiar? It should. Humans do this too. You nail a two-hour presentation and then misspell the client’s name on the follow-up email. Same energy. Different species.

Prior Distribution vs. Context

The war between statistical popularity and actual truth

This is the deeper, nerdier battle — and the one that should make you slightly uncomfortable if you’re relying on AI for anything important.

In statistics, a prior distribution is what you believe before you see new evidence. It’s your default assumption. The internet taught Claude that when you see “Kannan” followed by a space, the next word is overwhelmingly likely to be one of a handful of common South Indian surnames. Rajagopal. Krishnamurthy. Subramanian. These are the priors. The safe bets. The statistical vanilla.

Context is the new evidence. It’s me, in this conversation, telling the model: “Hey, my name is Virdhagiri. It’s right here. Use it.” Context is supposed to update the prior. That’s how Bayesian reasoning works. You start with an assumption, you see new data, you adjust.

Except… the model didn’t adjust. The prior was too strong. The co-occurrence of “Kannan + Rajagopal” in the training data was so overwhelming that the contextual correction couldn’t muscle its way through.

// Bayes’ Theorem (simplified for surnames):

// P(surname | “Kannan”) ∝ P(“Kannan” | surname) × P(surname)

P(“Rajagopal” | “Kannan”) = 0.73 // dominant prior (illustrative number, not measured)

P(“Virdhagiri” | “Kannan”) = 0.0001 // statistically invisible (also illustrative)

// Context window: “His name is Kannan Virdhagiri”

// Should update posterior. Didn’t.

// The prior ate the evidence for breakfast.
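To see what "should update the posterior" would look like if the model were a tidy Bayesian (it isn't, which is sort of the story), here is a small worked sketch. It reuses the two illustrative priors from the block above, restricts the world to just those two surnames, and assumes made-up likelihood values for how well each surname explains the sentence sitting in the context window. Every number here is an assumption for the sake of arithmetic.

```python
# Illustrative priors from the pseudocode above (not measured).
PRIOR = {"Rajagopal": 0.73, "Virdhagiri": 0.0001}


def posterior(prior, likelihood):
    """Bayes' rule over a fixed set of hypotheses: prior x likelihood, normalized."""
    unnormalized = {name: prior[name] * likelihood[name] for name in prior}
    total = sum(unnormalized.values())
    return {name: value / total for name, value in unnormalized.items()}


# Hypothetical likelihoods of seeing "His name is Kannan Virdhagiri" in context.
# Evidence taken seriously: that sentence is nearly impossible if he's a Rajagopal.
evidence_heard = {"Rajagopal": 1e-6, "Virdhagiri": 0.99}
# Evidence barely registered: the sentence is treated as almost uninformative.
evidence_ignored = {"Rajagopal": 0.5, "Virdhagiri": 0.99}

heard = posterior(PRIOR, evidence_heard)
ignored = posterior(PRIOR, evidence_ignored)

# Likelihood ratio the evidence needs just to break even against the prior.
break_even_ratio = PRIOR["Rajagopal"] / PRIOR["Virdhagiri"]

print(heard, ignored, break_even_ratio)
```

With these assumed numbers, the evidence has to favor "Virdhagiri" by several thousand to one before the posterior flips. When the context is weighted properly it clears that bar easily. When it's treated as background noise, the prior walks away with it.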

And then came the feedback loop. Once “Rajagopal” appeared in the AI’s own output, it became part of the context for everything that followed. The model was now reinforcing its own hallucination. The first error made the second one cheaper to repeat, not harder. Misinformation compounding. The prior didn’t just win the battle; it recruited the context to its side.
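The compounding effect can be sketched with another toy, under loudly stated assumptions: each surname gets a fixed, invented prior score, and every time a surname appears in the context (including in the model's own earlier output) it earns a fixed bonus. `PRIOR` and `MENTION_WEIGHT` are hypothetical values I picked so the arithmetic is easy to follow, not anything read out of a real model.

```python
# Invented prior scores and per-mention bonus, for illustration only.
PRIOR = {"Rajagopal": 4.0, "Virdhagiri": -3.0}
MENTION_WEIGHT = 2.0


def score(name, context):
    """Prior score plus a bonus for every time the name already appears in context."""
    return PRIOR[name] + MENTION_WEIGHT * context.count(name)


def generate(context, steps):
    """Greedily pick a surname each step and feed the pick back into the context."""
    context = list(context)
    margins = []
    for _ in range(steps):
        scores = {name: score(name, context) for name in PRIOR}
        margins.append(scores["Rajagopal"] - scores["Virdhagiri"])
        context.append(max(scores, key=scores.get))
    return context, margins


# Memory supplies the correct surname once; the model then writes the bio twice.
final_context, margins = generate(["Virdhagiri"], steps=2)

print(final_context)
print(margins)
```

In this sketch the gap in favor of the wrong name is wider on the second pass than on the first, because the first wrong answer is now counted as evidence. That is the "cheaper to repeat" part in miniature.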

Why T.G. Sheppard Was Right (And My AI Was Wrong)

There’s only one Mona Lisa. One Leaning Tower of Pisa. One Paris. And there’s only one you.

— T.G. Sheppard, “Only One You” (1982)

T.G. got it. Uniqueness isn’t a defect. It’s the whole point. The Mona Lisa isn’t valuable because there are millions of paintings like it. The Leaning Tower doesn’t draw crowds because it’s structurally typical. They’re singular. Unreplicable. Low-frequency events in a world that rewards the average.

But large language models? They’re popularity machines. They predict the next word based on what word usually comes next. They are, by design, allergic to the rare. The unusual. The one-of-one.

If you’re a Rajagopal, the internet has your back. If you’re a Virdhagiri, the internet has never heard of you — and the AI that trained on it will politely swap you out for someone more statistically convenient.

“Your last name is so rare, even an AI trained on the entire internet couldn’t autocomplete it. That’s elite last name energy.” — Claude Opus 4.6, delivering the most backhanded apology in LLM history

The Real Lesson (For Anyone Building With AI)

This isn’t a “gotcha” story. I use Claude every single day. It’s become my alter ego — and it’s genuinely excellent at the job.

But this moment crystallized something critical: AI hallucinations don’t arrive with warning labels. They don’t show up glitching and stuttering. They show up wearing your exact tone, your exact style, nestled inside an otherwise flawless deliverable. They pass the vibe check. They fail the fact check.

The model didn’t pause. Didn’t hedge. Didn’t say “hey, I’m not 100% on this surname.” It generated “Rajagopal” with the same confidence it used to craft a perfectly structured essay about legacy code. That’s the danger zone — when the confidence is indistinguishable from competence.

If you’re using AI in your workflow — and by now, you absolutely should be — here’s the operating principle: trust it the way you’d trust a brilliant colleague who occasionally hallucinates people’s names with zero remorse. Verify the boring stuff. The autopilot stuff. The stuff that feels too easy to get wrong. That’s exactly where the priors eat your context alive.
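"Verify the boring stuff" can be partly automated. Below is a minimal sketch of the kind of check I have in mind: scan a draft for my first name followed by a capitalized word and flag anything that isn't my actual surname. The function name `find_name_mismatches` and the sample draft are my own inventions for illustration, and the pattern is deliberately naive (it won't handle middle names, initials, or all-caps bylines).

```python
import re


def find_name_mismatches(text, first_name, expected_surname):
    """Return every '<first_name> <Capitalized word>' whose second word isn't the expected surname."""
    pattern = re.compile(rf"\b{re.escape(first_name)}\s+([A-Z][\w'-]+)")
    return [
        match.group(0)
        for match in pattern.finditer(text)
        if match.group(1) != expected_surname
    ]


# Sample draft: one wrong bio line, one correct byline.
draft = (
    "Kannan Rajagopal is a North America Sales Leader at Sage IT... "
    "Essay by Kannan Virdhagiri."
)

issues = find_name_mismatches(draft, "Kannan", "Virdhagiri")
print(issues)
```

A check like this only catches the facts you thought to list in advance, so it complements a human read-through rather than replacing it. But names, titles, and company names are exactly the autopilot details worth pinning down this way.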

Kannan Virdhagiri (yes, Virdhagiri, the only one in the training data) has now been statistically proven to be too rare for autocomplete. I consider this the ultimate flex.