If your delivery model is still losing time to manual handoffs, slow reviews, testing delays, and legacy-heavy maintenance, the cost is already compounding. Thoughtworks says up to 70% of engineering time can get absorbed by manual friction and “keeping the lights on,” which means too much effort goes into maintaining flow instead of moving releases forward.

AI-first software delivery is an approach where AI is built into the software delivery lifecycle to improve how software moves from requirements to release, rather than being used only as a coding aid. It helps stop that drag at the workflow level by extending AI into requirements, testing, governance, and release so work moves with less wait time, fewer avoidable defects, and less cleanup after deployment.

AI-First Software Delivery Benefits

Faster Delivery Cycles

Release speed usually slows long before deployment. Work gets stuck in planning gaps, review queues, test delays, and release preparation. Embedding AI across those stages helps reduce waiting time between steps and creates a cleaner path from idea to production.

Fewer Production Bugs

Production issues become more expensive the later they are found. Earlier validation, stronger test generation, and better risk detection help teams catch more defects before release instead of absorbing bug cleanup after deployment.

Shorter Testing and Feedback Loops

Feedback speed often decides how fast delivery can actually move. Faster test creation, better test selection, and tighter validation cycles help keep QA from becoming the next bottleneck once development output increases.

Better Release Reliability

Release confidence drops when teams cannot clearly see what is safe to ship. Better visibility into readiness, dependency risk, validation quality, and deployment risk improves trust in the release process before production impact grows.

Lower Engineering Waste

Too much engineering time still disappears into manual coordination, repetitive work, and keeping aging systems moving. Reducing that friction gives teams more room to focus on actual delivery instead of maintaining flow through avoidable overhead.

Stronger Developer Focus

Boilerplate work, repeated documentation effort, and routine validation drain attention from higher-value engineering work. Removing more of that load helps teams spend more time on architecture, product logic, and harder delivery decisions.

Faster Onboarding and Knowledge Access

New team members lose time when system context is scattered or difficult to reconstruct. Better access to documentation, code understanding, and workflow context helps people become productive faster across unfamiliar parts of the environment.

Lower Delivery Costs

Cost pressure rises when delays, rework, defect cleanup, and maintenance overhead keep compounding across releases. Reducing manual drag across the workflow improves cost efficiency without relying only on more headcount.

Better Scalability Across Teams

Local gains often fail to spread when each team adopts different tools, controls, and working methods. More consistent workflows, shared controls, and repeatable rollout patterns make broader scale easier to support.

More Capacity for Innovation

Manual delivery overhead limits how much time teams can give to product improvement and strategic work. Freeing engineering capacity from routine operational drag creates more room for experimentation, modernization, and higher-value development.

Better Risk Visibility

Hidden risk usually surfaces late, when correction is more disruptive and more expensive. Earlier signals around quality, security, coverage, architecture, and readiness help teams respond before those issues become broader delivery problems.

Stronger Business Agility

Speed only matters when teams can respond to change without increasing instability. A more adaptive delivery flow makes it easier to move faster, adjust priorities, and sustain progress under real operating conditions.

Why Coding Copilots Alone Will Not Improve Software Delivery Outcomes

Faster coding does not automatically mean faster software delivery. You can increase developer output and still see work slow down in backlog readiness, review cycles, testing, approvals, and release coordination.

That is where many organizations get stuck. The gain is real, but it stays local. Code moves faster while the rest of the lifecycle absorbs more pressure, more handoffs, and more rework. The result is uneven throughput and weaker business impact than the early productivity signals suggested.

The issue is not that coding copilots lack value. It is that delivery outcomes improve only when AI supports the system around development, not just development itself.

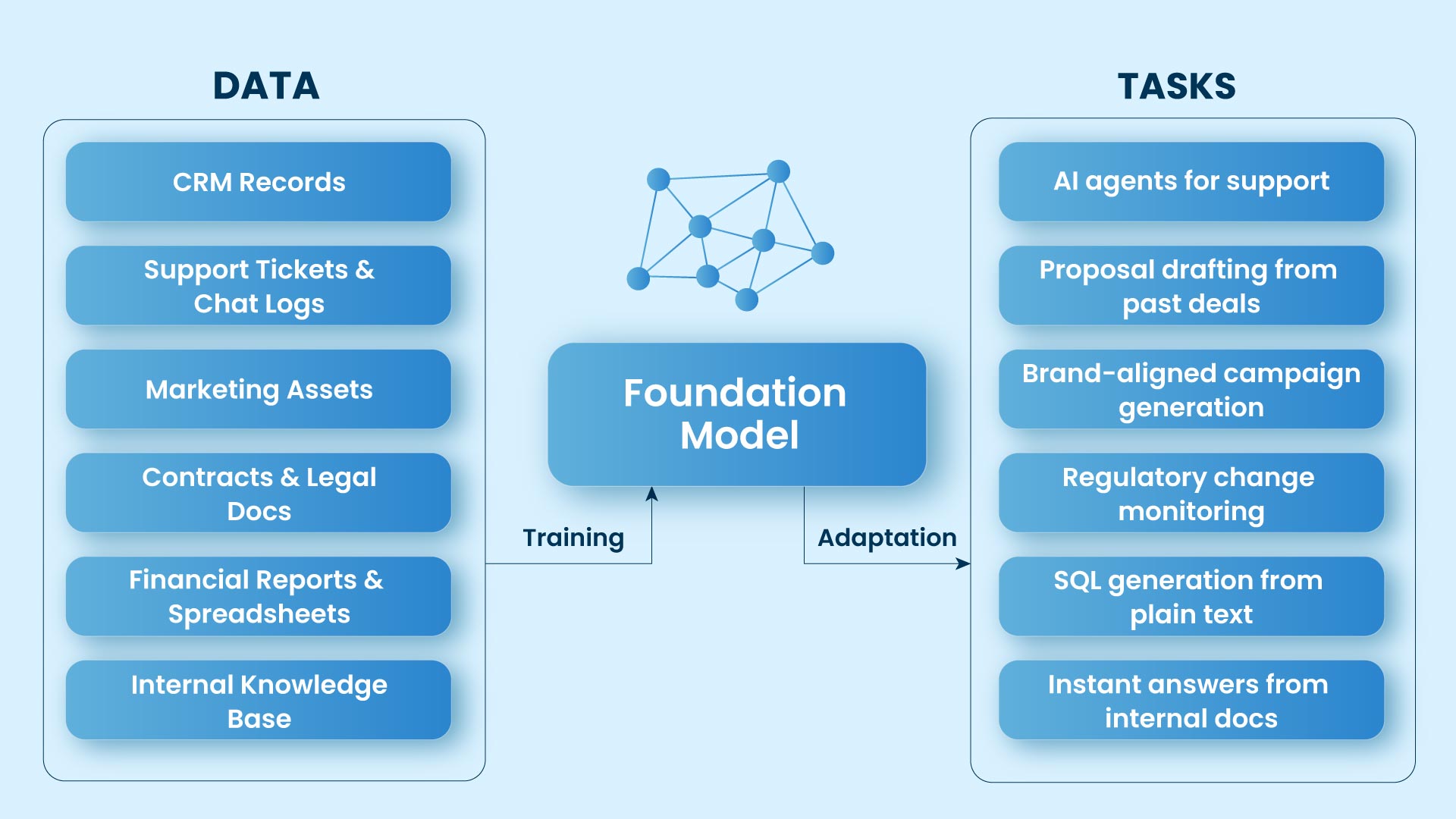

Why Organizations Are Rebuilding Software Delivery Around AI

Software delivery is under more pressure than the old model was built to handle. Teams are working across more systems, more handoffs, and tighter release expectations while still being asked to improve speed, quality, and cost control at the same time.

That strain shows up quickly. Backlogs grow, coordination gets heavier, and manual review points become harder to keep moving without slowing the rest of delivery down. Adding more effort rarely fixes the core issue because the operating model itself is starting to drag.

That is why organizations are rebuilding software delivery around AI. The shift is less about adopting new tools and more about restoring flow, improving control, and creating a delivery model that can scale with the environment around it.

The Biggest Barriers to Scaling AI Across the Delivery Lifecycle

AI adoption often spreads faster than delivery maturity. You may see strong progress in coding, testing, or content generation, yet still struggle to move work cleanly across requirements, approvals, release, and ongoing improvement.

That is where scale starts to stall. Tooling becomes fragmented, teams adopt AI unevenly, governance trails behind usage, and leaders lose visibility into what is actually creating value versus what is increasing risk. The result is more activity without the system-wide lift the business expected.

Scaling AI across the delivery lifecycle takes more than broader usage. It requires a coordinated model for control, rollout, readiness, and measurement so gains in one part of delivery do not create drag, rework, or blind spots elsewhere.

What a Governed AI Delivery Operating Model Looks Like

A governed AI delivery model keeps work moving faster without giving up control. In practice, that means AI is allowed to participate where speed adds value, but the model is designed with clear boundaries around what can run automatically, what needs approval, and where human review must stay close to execution.

That operating model depends on visibility as much as policy. You need to see what AI is doing, how decisions are being made, where exceptions appear, and when intervention is required. Governance becomes real when auditability, approvals, runtime oversight, and release controls are built into the workflow itself. That is what makes AI delivery easier to trust, easier to scale, and safer to use in real enterprise environments.

Platform Engineering for AI: The Missing Layer Between Pilots and Scale

Pilots can prove that AI works, but they do not create enterprise scale on their own. Scale becomes practical when teams can use approved models, workflows, and controls through a shared delivery layer instead of rebuilding access, policy, and orchestration from scratch each time.

That is where platform engineering matters. It gives you a more consistent way to roll out AI across teams, reuse what already works, enforce governance closer to execution, and keep visibility into how AI is being used. In real terms, it is the layer that turns scattered experiments into a delivery capability the enterprise can trust and expand.

AI-First Software Delivery Use Cases Across the Lifecycle

Requirements Validation and Planning

Backlog flow usually slows before coding starts. Converting stakeholder input, product goals, and existing documentation into clearer user stories, acceptance criteria, and technical requirements reduces that drag. The result is shorter refinement cycles and fewer unresolved ambiguities before delivery begins.

Regression Test Generation and Intelligent Testing

Test coverage is one of the first places where speed breaks down. Generating regression, unit, and integration tests from code changes and requirements keeps this stage moving, while sharper test selection keeps teams focused on the checks most likely to matter. That preserves tighter feedback loops without pushing all added delivery volume onto QA.

Release Readiness Checks and Deployment Risk Prediction

What blocks release speed is often not the code itself, but uncertainty around whether the release is actually safe to ship. Analyzing code changes, dependency risk, test outcomes, and failure patterns before deployment gives teams a clearer signal on what is ready, what needs attention, and what could create avoidable rollout issues later.

Technical Debt Analysis and Continuous Refactoring

Hidden drag rarely appears as one major failure. It builds through small design compromises, brittle dependencies, and unresolved cleanup across releases. Earlier visibility into code smells, architectural drift, and refactoring priorities reduces that accumulated drag before it starts slowing future delivery.

Legacy Code Understanding and Modernization

Modernization usually stalls when teams cannot see what older systems are actually doing. Generated documentation, dependency mapping, and clearer visibility into system behavior across large or poorly documented codebases make it easier to define lower-risk modernization steps without freezing delivery elsewhere.

Documentation Generation and Knowledge Access

Knowledge gaps slow delivery every time teams have to stop and reconstruct context. Better documentation, inline explanations, and easier retrieval of system context keep that from turning into routine overhead. This reduces dependency on tribal knowledge and makes it easier for new team members to become productive faster.

Architecture and Design Validation

Scalability and reliability problems often begin much earlier than production. Checking architecture and design choices for pattern fit, consistency, and risk signals before those issues spread downstream makes review more proactive and reduces the cost of correcting structural mistakes later.

Code Generation, Refactoring, and Debugging

Repetitive build work still consumes too much engineering attention in many teams. Context-aware code generation, repetitive refactoring, and faster debugging reduce that load. The gain is not only faster coding, but cleaner development flow without spending the same level of effort on low-value repetition.

Pipeline Optimization and Delivery Flow Improvement

CI/CD pipelines become bottlenecks when build, validation, and deployment steps no longer keep pace with upstream work. Closer analysis of delivery paths, bottlenecks, and workflow movement improves release flow and reduces the waiting time that often accumulates between development and deployment.

Incident Detection and Automated Response

Production issues become more expensive the longer they remain hidden or unresolved. Earlier telemetry monitoring, anomaly detection, and likely-cause identification improve visibility before minor issues expand into broader service problems. In more mature environments, faster response and corrective action also strengthen resilience without adding more manual firefighting.

Why Workforce Redesign Matters as Much as the Technology Stack

AI-first delivery works best when the team model evolves with the technology model. Faster execution only becomes reliable value when developers, architects, product teams, QA, and delivery leaders know how to use AI consistently, where to apply judgment, and how to keep workflow ownership clear as work moves faster.

That is why workforce redesign matters as much as the technology stack. AI fluency, stronger orchestration skills, and role clarity help teams turn acceleration into better delivery outcomes instead of uneven adoption, weak decisions, or new coordination friction. Without that shift, even a strong platform and governance model will underperform.

A Practical Roadmap for Adopting AI-First Software Delivery

AI-first software delivery should not begin with broader AI rollout. It should begin where your delivery workflow is already slowing down, because that is where AI can create visible improvement without creating uncontrolled disruption.

Start where delivery friction is already visible

Look first at approval delays, testing bottlenecks, review overload, release coordination issues, brittle dependencies, and unclear handoffs. AI creates the most value when it is applied to work that is already causing measurable drag.

Prove value in a few high-impact workflows

Choose a small number of workflows where improvement will be visible quickly. Focus on repeated manual effort, slow decisions, or coordination-heavy work that affects delivery flow. Early value matters more than broad exposure.

Set execution boundaries before scale

Define where AI can act independently, where human review is required, and which actions need traceability. This is what prevents review pressure, ownership confusion, and governance lag from growing as usage expands.

Build the shared foundation for reuse

Once early workflows are working, create the common layer that supports wider rollout. Reusable workflow patterns, approved model access, common controls, and orchestration standards are what stop local success from breaking apart across teams.

Prepare teams for a different delivery model

AI-first delivery changes how work is created, reviewed, approved, and escalated. Teams need clearer decision roles, stronger judgment around AI-assisted work, and more consistent operating discipline so adoption does not stay uneven or depend on a few highly capable people.

Scale through outcomes

Expand only when early workflows are performing reliably under real delivery conditions. The right signals are stronger quality, less rework, better release resilience, lower coordination overhead, and faster movement across the lifecycle.

What usually challenges adoption in real time?

How Do We Keep These Problems From Disrupting Your Deployment?

You prevent these problems from hurting deployment by making rollout governed, phased, and workflow-led from the start. Our Intelligent Software Delivery Solution helps you do that by: