Building an AI agent has become deceptively easy.

A strong team can connect a frontier model to a few tools, add retrieval, layer in some prompt logic, and deliver a compelling demo in days. That speed has created a dangerous illusion in the market: if the first agent comes together quickly, the underlying infrastructure must be manageable too.

That assumption is where many enterprise AI initiatives begin to drift off course.

Because building an agent is not the same as building the infrastructure required to run agent systems reliably in production. One is a prototype milestone. The other is an operating commitment.

That distinction matters more in 2026 than it did even a year ago. Agentic systems are no longer evaluated only on whether they can produce an answer or complete a task. They are increasingly judged on whether they can operate safely, integrate cleanly, remain observable under pressure, and evolve without creating an expanding maintenance burden. This is exactly the tension captured in your source framing: the build-versus-platform decision is not just about features, but about the hidden integration tax that shows up in engineering time, maintenance overhead, and reliability risk.

That is the real story behind AI agents right now. The market is still full of prototype optimism, but the harder enterprise question has already arrived: who is going to own the operational complexity?

Most teams are still evaluating the wrong problem

Many organizations still frame the decision as a classic build-versus-buy exercise at the demo layer.

They compare model support, workflow flexibility, tool connectors, and licensing cost. Those factors matter, but they are not what determines whether an agent strategy scales. The real issue is what happens after the first deployment works well enough to win internal confidence.

That is when the economics change.

A prototype proves possibility. It does not prove durability. Once agents are placed inside real workflows, they inherit all the messy properties of enterprise systems: permissions, latency, exception handling, policy constraints, inconsistent data quality, evolving tools, and unpredictable user behavior. At that point, the problem is no longer “Can we build this?” It becomes “How much undifferentiated infrastructure do we want to own?”

That is why the platform conversation has become more urgent. In 2026, the ecosystem itself is signaling that agent infrastructure is becoming its own layer of architecture. MCP is gaining traction as a standard for connecting models to tools and external systems, while A2A reflects the growing need for multi-agent interoperability across environments. Anthropic frames MCP as an open standard for connecting AI systems to the places where context and actions live, and Google introduced A2A to support more standardized coordination among agents.

In other words, the industry is moving beyond isolated assistants. Enterprises are starting to build distributed agent systems whether they intended to or not.

The integration tax is where DIY enthusiasm meets reality

The hidden cost of building your own agent infrastructure is rarely the model bill. It is the surrounding system you have to keep building once the prototype leaves the lab.

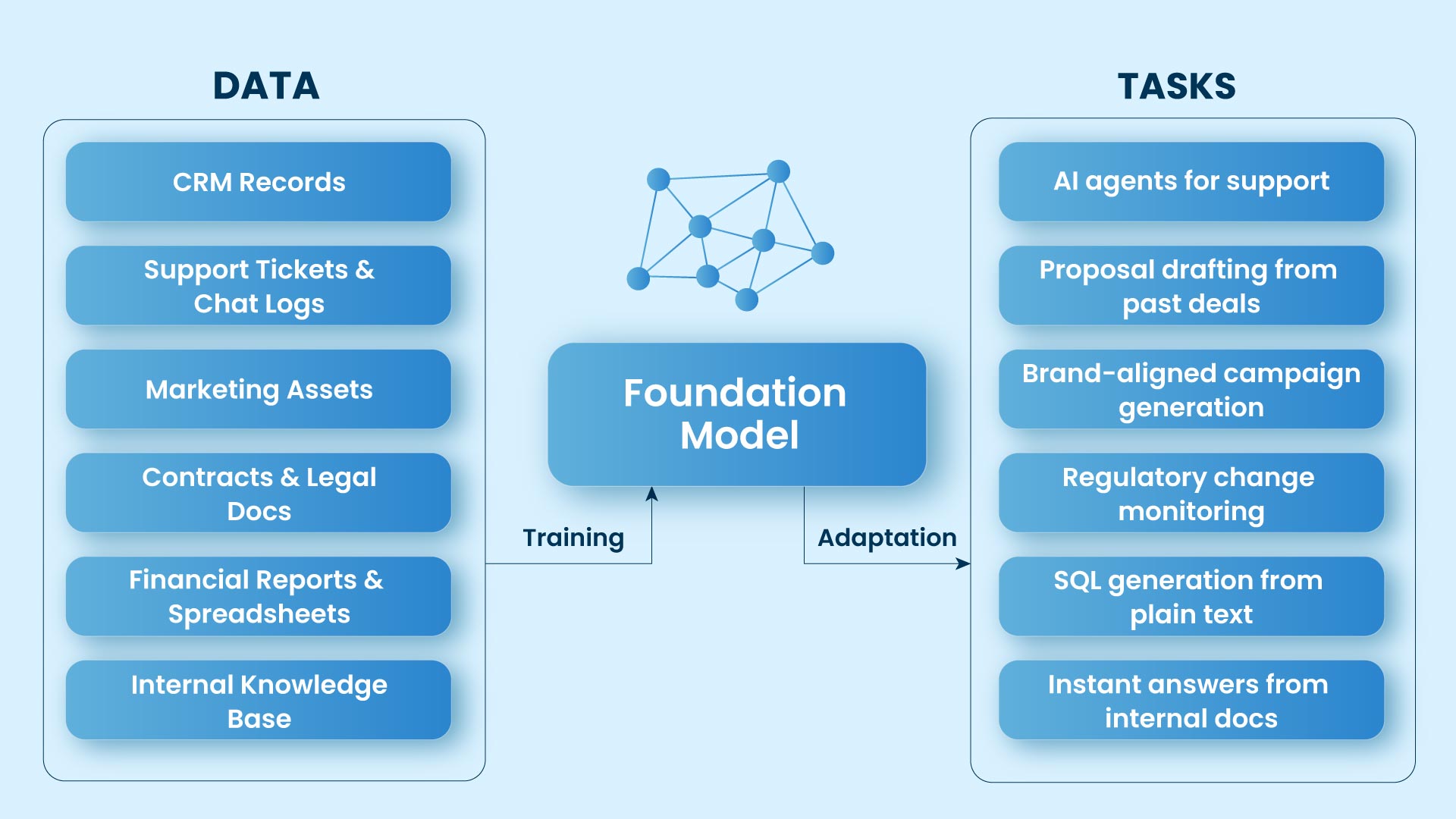

Connectivity is the first place this becomes obvious. Enterprise agents need access to files, tickets, repositories, internal knowledge, SaaS tools, APIs, and operational systems. Every one of those connections looks manageable on a roadmap. In production, each becomes its own failure surface. Teams are not just wiring APIs. They are managing authentication, permissions, retries, context windows, latency, state handoffs, fallback logic, and recovery paths across distributed workflows.

Then governance enters the picture. What can an agent see? What can it trigger? What must it redact, escalate, or avoid entirely? These questions tend to get postponed because they do not make demos more exciting. But your source material is right to call out guardrails as one of the first things DIY teams skip. Skipping them does not remove the work. It simply delays it until the cost of getting it wrong is higher.

Observability is where many internal builds start to feel fragile. Traditional software usually fails loudly. Agent systems often fail ambiguously. A tool call may silently degrade. A retrieval step may miss critical context. A model may produce plausible but incorrect reasoning. A workflow may work nine times and fail on the tenth because some hidden assumption finally breaks. That is why modern LLM observability tooling increasingly centers on traces, runtime debugging, latency, and cost analysis rather than simple logs. Platforms like Langfuse position tracing and evaluation as core infrastructure for understanding how LLM applications actually behave in production.

Interoperability only magnifies the challenge. A single agent can survive on custom glue code for a while. A portfolio of agents cannot. As soon as different agents begin handing work across specialized tasks, brittle integrations turn into architectural drag. Google’s A2A push makes that shift explicit: multi-agent systems need more standardized ways to discover capabilities, exchange work, and coordinate across tools and environments.

What many teams call “building our own stack” is often just the early phase of inheriting a much larger infrastructure burden.

Our Archestra AI Deployment provides a streamlined, integrated platform that alleviates the operational complexities of deploying AI agents. By automating integrations, governance, and observability, our solution enables enterprises to deploy AI agents efficiently, without the hidden costs or ongoing maintenance of managing complex infrastructure. This allows your team to focus on innovation while our platform handles the operational heavy lifting.

The hidden monster most roadmaps ignore: evaluation

Of all the costs that surface in DIY agent systems, evaluation is the one most often recognized too late.

Teams plan for the agent, the workflows, and maybe the connectors. What they do not fully plan for is the eval loop required to keep the system trustworthy once it starts changing.

And it always changes.

Prompts get refined. Models get swapped. Tool schemas evolve. Business rules shift. A small update in one agent can degrade the output quality of another. A tool change can quietly break a handoff. A model upgrade can alter reasoning patterns just enough to create regressions that no one sees until customers or employees hit them in production.

This is why eval-driven development is becoming essential rather than optional. OpenAI’s current eval guidance focuses on production concerns such as prompt regression detection, tool evaluation, and designing evaluation loops that support real system iteration. The broader ecosystem has also normalized LLM-as-a-judge techniques for quality assessment where deterministic tests fall short. Langfuse likewise emphasizes evaluation workflows that combine model-based scoring, human review, and custom metrics to track quality and drift over time.

This is not a minor implementation detail. It is one of the hidden monsters of DIY infrastructure. If you cannot test changes across hundreds or thousands of representative runs, you do not really control the system. You are simply hoping it keeps working.

And hope is not an infrastructure strategy.

What gets postponed early becomes expensive later

This is why your source document’s warning is commercially sharp: in build-it-yourself environments, guardrails get skipped, observability gets omitted, and A2A often never gets built for the long tail.

That pattern is understandable. Teams under pressure prioritize visible progress. They build the part that proves value fastest. But in agent systems, the invisible layers are often the ones that determine whether value can survive contact with production.

Sooner or later, those postponed layers come due.

That is when organizations realize they did not avoid the platform problem. They internalized it. What looked like a tactical build starts behaving like a multi-quarter infrastructure program, complete with governance work, runtime tracing, eval pipelines, integration maintenance, and interoperability debt.

This is the trap. DIY feels cheaper when teams price only the first mile. It looks very different when they price the miles that follow.

The strategic question is not whether you can build it

Of course, many teams can build their own agent infrastructure. That is not the strategic question.

The real question is whether owning the plumbing is where competitive advantage actually comes from. In most cases, it does not. The defensible value in enterprise AI is rarely the orchestration scaffolding itself. It lies in the proprietary data, decision logic, workflows, and domain intelligence running through that scaffolding.

That is why the platform argument is ultimately about focus. A mature platform does more than accelerate development speed. It compresses time to reliability by absorbing the undifferentiated infrastructure work that would otherwise consume internal engineering capacity. That is precisely why this positioning works in a sales conversation: it reframes the issue as the cost of inaction, not convenience.

Competitive advantage will not come from rebuilding the same guardrails, traces, eval harnesses, and coordination layers that the rest of the market is also struggling with. It will come from deploying better intelligence on top of stable, governed infrastructure.

If your best engineers are spending most of their time debugging orchestration, patching integrations, rebuilding evaluation loops, and keeping brittle workflows upright, you are not accelerating innovation. You are financing operational drag.

In 2026, the organizations that win with agentic AI will be the ones that move fastest from experimentation to governed, scalable production, and Sage IT is well positioned to help enterprises navigate that transition.