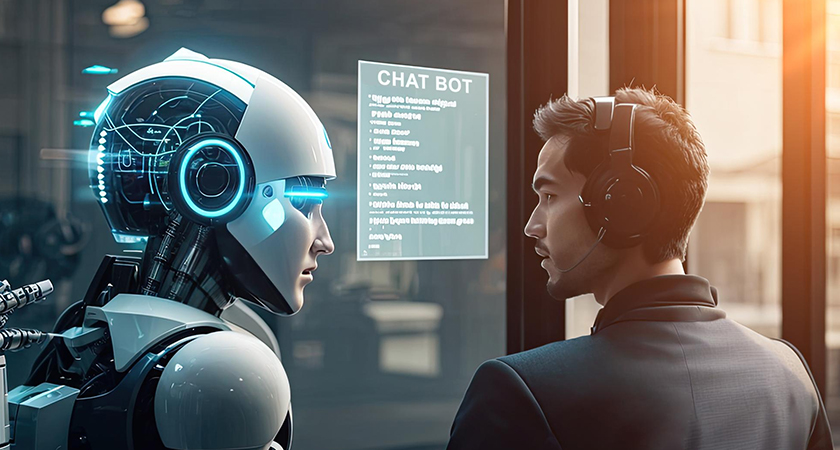

The chatbot gap is killing enterprise AI ROI.

Teams build a polished RAG assistant. It answers policy questions accurately, summarizes documents well, and performs beautifully in demos. Then a real employee asks it to check eligibility, update a system of record, or trigger a workflow, and the illusion breaks. The assistant can explain the work, but it cannot do the work.

That is where many enterprise AI initiatives stall.

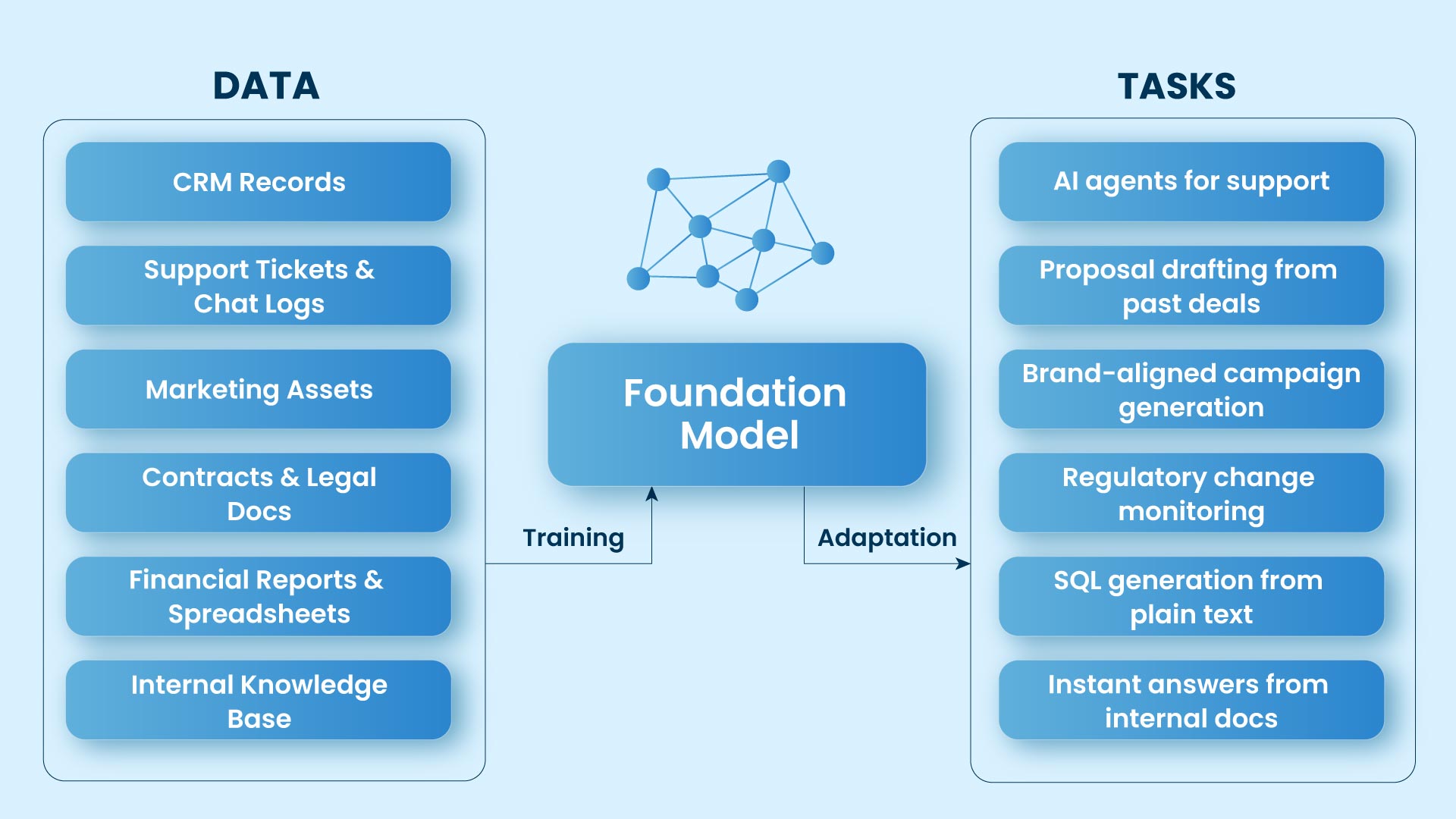

The issue is not model intelligence. It is architectural incompleteness. In enterprise environments, useful agents need three layers working together: RAG to access organizational knowledge, native tools to take action, and MCP to connect securely to enterprise systems.

| Layer | Analogy | Example stack | What it unlocks |
| --- | --- | --- | --- |
| RAG | Memory | pgvector, chunking, cosine similarity | Grounded answers |
| Native tools | Hands | web search, code execution, HTTP, file ops | Real actions |
| MCP | Nervous system | Salesforce/GitHub/data warehouse servers | Enterprise connectivity |

MCP in one line: MCP is to AI agents what USB-C is to devices, a standard way to connect to external systems without rebuilding a custom adapter every time. That “USB-C for AI” framing appears in the official MCP documentation.

Consider a simple HR request:

“Am I eligible for the quarterly remote-work bonus, and can you submit it for me?”

That single question reveals whether an AI system is merely conversational or genuinely operational.

RAG gives the agent organizational memory

Every enterprise workflow begins with context. An HR agent cannot answer correctly unless it can access the company’s actual policies rather than rely on generic model knowledge.

That is the role of Retrieval-Augmented Generation. In a typical enterprise setup, documents are broken into chunks, converted into embeddings, stored in a vector database such as pgvector, and retrieved using similarity measures such as cosine similarity. The result is grounded output with source attribution, tied to the underlying material rather than unsupported generation.
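The chunk, embed, and retrieve steps can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the bag-of-words `embed` function is a toy stand-in for a real embedding model, the in-memory list stands in for a vector store such as pgvector, and the policy text and all function names are hypothetical.

```python
import math
from collections import Counter

# Hypothetical policy text, invented for illustration.
POLICY = (
    "Employees may expense home office equipment up to an annual limit. "
    "The quarterly remote-work bonus requires 90 days in role and a utilization threshold. "
    "Vacation requests must be approved by a manager."
)

def split_into_chunks(text: str) -> list[str]:
    """Naive chunking: one chunk per sentence."""
    return [s.strip() for s in text.split(". ") if s.strip()]

def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(w.strip(".,?").lower() for w in text.split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query: str, chunks: list[str], k: int = 1) -> list[tuple[int, str]]:
    """Return the top-k (chunk_index, chunk_text) pairs; the index serves as the source reference."""
    q = embed(query)
    ranked = sorted(enumerate(chunks), key=lambda pair: cosine(q, embed(pair[1])), reverse=True)
    return ranked[:k]

chunks = split_into_chunks(POLICY)
top = retrieve("Am I eligible for the quarterly remote-work bonus?", chunks)
print(top)
```

Returning the chunk index alongside the text is what makes source attribution possible: the answer can point back to the exact passage it was grounded in.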

In the HR example, the agent retrieves the relevant section of the company’s work-from-home policy and cites the exact rule: employees must complete 90 days in role and meet a utilization threshold to qualify.

At that point, the agent can give a grounded answer:

“Based on the policy, you appear eligible.”

Useful, yes. Complete, no.

The agent still cannot verify tenure, calculate eligibility, or submit anything into an HR platform. RAG gives the system memory, but not execution.

Native tools turn language into action

Once the policy is retrieved, the next requirement is action.

The core tool categories are web search, code execution, HTTP, and file operations. These capabilities transform an LLM from a text generator into a system that can perform operational steps. OpenAI’s Responses API reflects this shift by treating tool use as a core part of agent behavior, with support for built-in capabilities such as web search, file search, code interpreter, and remote MCP tools.

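In the HR workflow, the agent now uses tools to evaluate eligibility: it looks up the employee’s start date, calculates days in role, and compares utilization against the policy threshold.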
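A tool of this kind could be as small as the function below. It is a sketch under stated assumptions: the 90-day rule comes from the policy in the example, while the function name, the dates, the utilization figure, and the 0.75 threshold are hypothetical values chosen for illustration.

```python
from datetime import date

MIN_DAYS_IN_ROLE = 90  # from the example policy

def check_bonus_eligibility(
    start_date: date,
    utilization: float,
    utilization_threshold: float,
    today: date,
) -> dict:
    """Compute tenure in days and apply both policy conditions."""
    days_in_role = (today - start_date).days
    eligible = days_in_role >= MIN_DAYS_IN_ROLE and utilization >= utilization_threshold
    return {"days_in_role": days_in_role, "eligible": eligible}

# Hypothetical inputs; a real agent would fetch these from HR data.
result = check_bonus_eligibility(
    start_date=date(2025, 1, 1),
    utilization=0.82,
    utilization_threshold=0.75,  # assumed value, not stated in the policy excerpt
    today=date(2025, 5, 5),
)
print(result)
```

Passing `today` explicitly, rather than reading the system clock inside the function, keeps the check deterministic and easy to audit.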

Now the response changes meaningfully:

“You joined 124 days ago, your utilization is above the threshold, and you are eligible. I’ve prepared the submission.”

This is the moment enterprise AI starts to feel useful. The agent is no longer interpreting policy alone; it is performing parts of the workflow.

But one limitation remains. Preparing a submission is not the same as completing it. In most organizations, the final step lives inside a system of record.

MCP connects agents to the enterprise

That is where Model Context Protocol becomes decisive.

If RAG gives the agent memory and tools give it hands, MCP provides a standardized way to reach the rest of the enterprise. Our solution, Archestra AI Orchestration, simplifies enterprise system integration. Instead of hard-coding every connector into each agent, Archestra connects agents to platforms like Salesforce, GitHub, and data warehouses through a standardized interface. This reduces the complexity of managing custom connectors and accelerates the deployment of operational AI agents.

GitHub already offers an official MCP server that enables repository access, issue management, pull request workflows, and automation through natural-language interactions.

Now return to the HR request one final time.

The employee asks:

“Can you submit it for me?”

A basic assistant stops at recommendation. An agent with MCP connectivity can complete the loop by submitting the request to the HR system of record and confirming the result to the employee.
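At the protocol level, that final step is a tool invocation sent to an MCP server. MCP messages use JSON-RPC 2.0, and a tool is invoked with the `tools/call` method. The sketch below only builds the request; the tool name `submit_bonus_request`, its arguments, and the HR server that would expose it are hypothetical, and the transport and response handling are omitted.

```python
import json
from itertools import count

_request_ids = count(1)  # simple incrementing JSON-RPC request ids

def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build an MCP tools/call request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }

# Hypothetical tool and arguments exposed by an assumed HR MCP server.
request = build_tool_call(
    "submit_bonus_request",
    {"employee_id": "E-1024", "bonus_type": "quarterly_remote_work"},
)
print(json.dumps(request, indent=2))
```

Because every MCP server accepts the same message shape, the agent needs no connector-specific code to reach a different system; only the tool name and arguments change.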

That is the difference between a chatbot and an enterprise agent.

It is also why MCP has become one of the most important developments in the agent ecosystem. OpenAI now supports remote MCP servers in its Responses API. GitHub’s MCP support in VS Code is generally available, signaling broader adoption in developer workflows. Anthropic also donated MCP to the Agentic AI Foundation under the Linux Foundation in December 2025, reinforcing its trajectory toward a more neutral industry standard.

Why this three-layer model works

Each layer solves a different enterprise AI failure point.

Without RAG, the agent lacks organizational context.

Without tools, it cannot execute tasks.

Without MCP, it cannot operate inside enterprise systems.

Put together, those layers create something fundamentally different: not a smarter chat interface, but an operational system that can reason with internal knowledge, take action, and interact with the platforms where business processes actually run.

That is the real shift underway in enterprise AI.

The winners in this next phase of enterprise AI will not be defined by who deploys the most eloquent model. They will be defined by who builds the most effective agent stack, one that combines memory, action, and connectivity.

That is how AI agents stop being impressive demos and start becoming enterprise infrastructure. It is also why Sage IT views enterprise AI as an architectural challenge, not just a model choice: lasting value comes from connecting intelligence to real business systems through the right stack.