Two years of enterprise AI experimentation have hit a wall: the production gap.

Teams learned the hard way that a dashboard can tell you how many tokens you used. It cannot tell you why your support agent suddenly costs 40% more, why your retrieval workflow starts hallucinating under live traffic, or why a model swap quietly breaks a prompt chain that worked yesterday.

That is the difference between monitoring and observability.

A dashboard reports activity. Operational infrastructure explains causality.

That distinction now matters at the executive level. As LLM applications move from pilots into revenue-bearing workflows, enterprises are no longer asking whether AI works in demos. They are asking whether it can be governed, debugged, optimized, and trusted in production.

The standard for LLM observability has shifted. It is no longer about metrics alone. It is about telemetry, traceability, control, and economic accountability across the full lifecycle of an AI system.

If you cannot trace cost, latency, regressions, and routing decisions back to a specific workflow path, prompt version, or model change, you do not have observability. You have a black box with charts.

The first place that black-box problem shows up is cost. Not total spend in the abstract, but the inability to explain where AI spend actually comes from.

The bottom line: In production AI, observability is not a reporting layer. It is operational infrastructure.

The Cost Attribution Blind Spot Is the Real Executive Problem

Most organizations still look at AI cost through an infrastructure lens: total spend, token usage, model volume, monthly growth curves. Those numbers are useful, but they are not decision-grade.

The real problem is cost attribution.

In many production environments, most LLM spend is concentrated in a surprisingly small number of agents, workflows, or prompt paths. A few badly scoped agents, verbose prompts, runaway tool loops, or inefficient multi-step sessions can silently consume the majority of the budget. Teams often discover this only after costs are already embedded.

This is the moment AI stops looking like innovation spend and starts looking like unmanaged operational cost.

CFOs do not need another dashboard showing aggregate token burn. They need visibility into the unit economics of AI:

That is the difference between “AI spend” and “AI economics.”

With our Archestra Platform Observability, enterprises can gain complete visibility into cost attribution, tracking AI spend at the workflow level. By connecting costs directly to specific agents and business outcomes, our solution empowers decision-makers to pinpoint which workflows generate the highest ROI and which need optimization to drive better profitability. This level of transparency ensures that every AI dollar spent is working towards measurable business results.

A mature observability layer should answer questions like:

Cost only becomes actionable when it is tied to business outcomes.

The bottom line: If finance cannot connect AI cost to business value at the workflow level, AI remains an expense line, not an operating asset.

What Modern LLM Observability Actually Includes

Fixing that blind spot requires observability to move below the dashboard layer and into the workflow itself. In practice, that means visibility across four levels.

1. Trace-Level Visibility

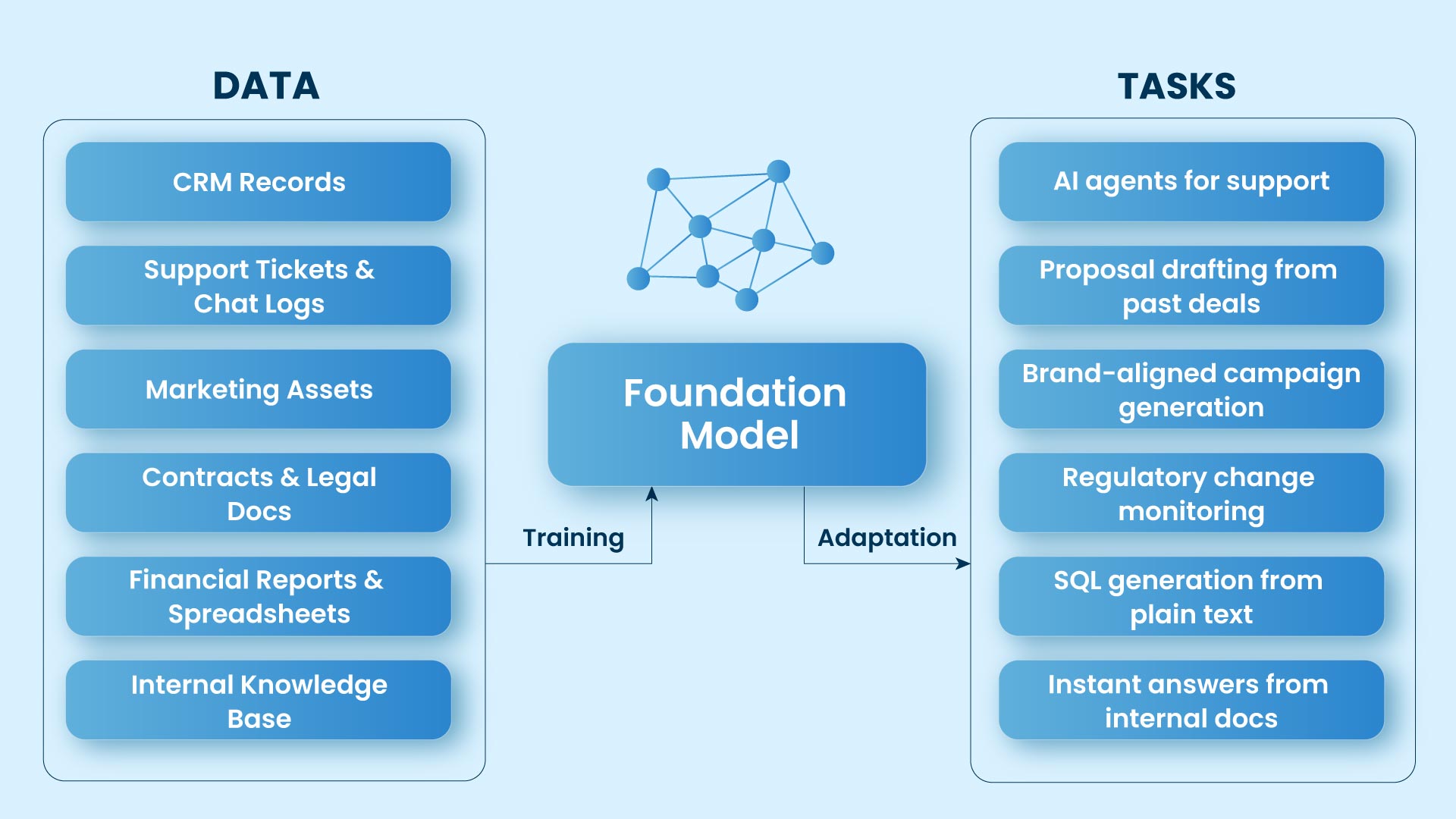

Most production AI systems are not single model calls. They are chains of prompts, retrieval steps, tool invocations, guardrails, and agent handoffs.

That means failures are rarely obvious. A spike in latency may not come from the model itself. A cost increase may come from a tool loop. A drop in answer quality may come from an intermediate prompt, retrieval failure, or orchestration error.

Teams need full traces across the system:

This is where tooling such as Langfuse and OTel-aligned tracing patterns becomes important. The point is not just visibility. It is causality.

2. Prompt Version Governance

Prompting is now an operational discipline, not a creative exercise.

A small prompt change can alter cost, completion rate, latency, and downstream tool usage. Without version control, teams are effectively changing production logic without an audit trail.

Prompt observability should track:

Prompt changes should be treated with the same seriousness as application code changes.

3. Session-Level Tracking

Single-request observability is not enough. Many problems only appear across multi-turn interactions.

A customer support copilot may work perfectly on turn one, then degrade after context accumulation, retrieval drift, or repeated tool calls. Session tracking is what exposes those long-chain failures.

It also reveals how spend actually behaves in production. Cost is often not driven by one prompt. It is driven by how long a conversation lasts, how often context is reprocessed, and how many back-end actions a session triggers.

4. A2A and Workflow Latency

In agentic systems, latency is no longer just model latency. It is workflow latency.

The delay users feel often comes from:

Without A2A latency visibility, optimization efforts focus on the wrong bottleneck.

The bottom line: If you can see response time but not the trace path that created it, you are measuring symptoms, not operations.

Multi-Provider Architectures Create a New Operational Problem

Observability gets harder, and more valuable, the moment enterprises stop standardizing on a single model provider.

The logic is sound. Enterprises want flexibility across providers for cost, resilience, quality, regional compliance, and model specialization. One provider may be better for summarization. Another may be cheaper for high-volume classification. A third may offer stronger uptime guarantees for a regulated workflow.

That flexibility creates leverage only if teams can see its operational consequences in real time.

Provider switching without redeploy sounds elegant at the architecture layer. In production, it raises harder questions:

Centralized key management and provider abstraction help, but they are not enough. Portability without traceability simply relocates risk from deployment to runtime.

The enterprise challenge is no longer model access. It is model governance under changing conditions.

The bottom line: Provider flexibility creates business leverage only when teams can observe the operational consequences of every routing decision.

Prompt Versioning Is Now a Financial Control Surface

Most teams still talk about prompt versioning as a quality issue. That is incomplete.

Prompt versioning is also a financial control surface.

A longer system prompt can increase token cost immediately. A subtle instruction change can trigger more retrieval calls. A revised tool-use policy can improve accuracy while quietly destroying margin. In multi-agent systems, one prompt modification can cascade into broader workflow cost inflation.

That is why prompt governance cannot live outside the telemetry layer.

Teams should be able to answer:

This is also where model drift monitoring becomes essential.

One of the hardest production realities in LLM systems is the non-deterministic regression. A model update, provider-side change, or version swap can break behavior without changing your application code. Prompts that worked last month can degrade quietly. Output structure can shift. Tool use can become less reliable. Latency can change with no obvious root cause.

Observability has to capture not just prompt versions, but the interaction between:

Without that, teams are flying blind through a constantly changing inference layer.

The bottom line: If you are not monitoring prompt versions against model drift, you are not managing reliability. You are absorbing randomness.

The Real Shift: Dashboards vs. Control Planes

That points to the larger strategic shift.

Observability is no longer about watching AI systems. It is about operating them.

In mature environments, the observability layer connects:

At that point, observability stops being a dashboard and starts becoming a control plane.

That is the model enterprises should be building toward. Not more charts. More operational control.

The cloud analogy is useful here. Distributed systems did not become manageable because teams had prettier monitoring dashboards. They became manageable because telemetry, tracing, and orchestration evolved into operational infrastructure.

The same is now happening in AI.

The bottom line: The companies that scale AI successfully will not be the ones with the most model access. They will be the ones with the strongest operational infrastructure around it.

The Question Enterprises Should Be Asking Now

That is no longer the most important question.

Models will change. Providers will compete. Pricing will move. Capabilities will improve. Some regressions will be visible. Others will be subtle and expensive.

The better question is this:

That is the real enterprise AI question now.

Because in production environments, observability is not a window into the system.

It is the system that makes scale survivable.